Why Artificial Intelligence Is Actually Not Intelligent

Artificial intelligence is on everyone’s lips. It generates impressive images, writes poems, writes code, and holds seemingly effortless conversations. Progress, especially with large language models (LLMs), is rapid, and it’s easy to attribute a form of intelligence or even consciousness to these systems. We are fascinated by their capabilities and begin to dream of, or fear, a “superintelligence.”

But scratch the surface of this glittering facade, and a completely different truth is revealed: By human standards, today’s AI is fundamentally “stupid.”

This provocation is not intended to diminish the technological achievement. Rather, it aims to ground the hype and clarify what we are actually dealing with. Here are the reasons why AI in its current form is far from true intelligence.

AI “understands” nothing – it’s a stochastic parrot

Perhaps the biggest misconception is the assumption that AI “understands” the world. When a large language model writes a coherent text about climate change, it has no concept of “Earth,” “temperature,” or “consequence.”

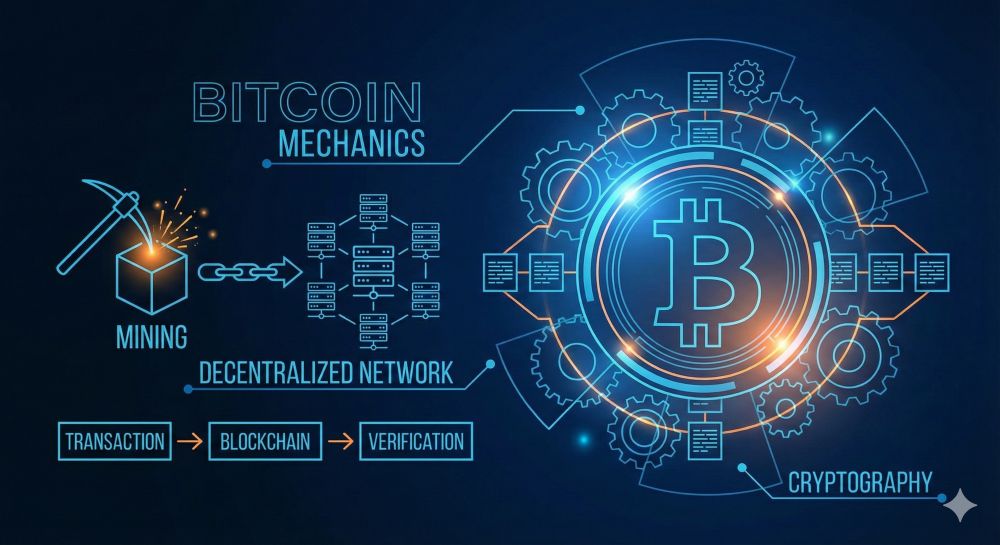

What’s really happening: These models are gigantic pattern recognition machines. They have been trained on enormous amounts of text (large parts of the internet, books, articles). Their core task is to predict the statistically most probable next token (roughly a word or a word fragment) in response to an input (prompt). Token by token.

It’s not thinking, it’s probability: The AI doesn’t “know” that Paris is the capital of France. It only knows that after the sentence “The capital of France is…” the word “Paris” follows with an extremely high probability because it has seen this word combination millions of times in its training data.

The “stochastic parrot”: This term aptly describes the phenomenon. The AI parrots what it has learned without understanding the semantic content – the meaning – of the words.
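To make the “probability, not knowledge” idea concrete, here is a minimal sketch in Python. It is a toy that only counts which word follows which two-word context; real LLMs are neural networks trained on vastly more text, so this is an illustration of the principle, not how any actual model is built. The corpus, the function names, and the context size are all assumptions made up for this example.

```python
from collections import Counter, defaultdict

# Tiny made-up corpus (an assumption for illustration, not real training data).
CORPUS = [
    "the capital of france is paris",
    "the capital of france is paris",
    "the capital of france is paris",
    "the capital of france is lyon",
    "the capital of italy is rome",
]

def train(corpus, context_size=2):
    """Count which word follows each context of `context_size` words."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.split()
        for i in range(context_size, len(words)):
            context = tuple(words[i - context_size:i])
            counts[context][words[i]] += 1
    return counts

def next_word_probabilities(counts, prompt, context_size=2):
    """Return {word: probability} for the word that follows the prompt."""
    context = tuple(prompt.lower().split()[-context_size:])
    followers = counts.get(context, Counter())
    total = sum(followers.values())
    if total == 0:
        return {}
    return {word: n / total for word, n in followers.items()}

def predict(counts, prompt, context_size=2):
    """Pick the single most frequent follower, or None for an unseen context."""
    probs = next_word_probabilities(counts, prompt, context_size)
    return max(probs, key=probs.get) if probs else None

model = train(CORPUS)
probs = next_word_probabilities(model, "The capital of France is")
prediction = predict(model, "The capital of France is")
```

In this toy corpus, “paris” follows “france is” three times out of four, so the model predicts “paris”. It would predict “lyon” just as readily if the corpus said so more often: the answer reflects frequency in the data, not knowledge of geography.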

The total lack of common sense

True intelligence is based on a foundation we call “common sense.” This is a vast, implicit knowledge about the world that we learn through physical experience and social interaction.

A toddler learns quickly: Objects fall down. Water is wet. You can throw a ball, but not a house. An AI learns none of this unless it has been explicitly described in text form.

Example: Ask an AI if an elephant will fit in a normal refrigerator. It might answer: “No, an elephant is much too big for a refrigerator.” That sounds clever. But ask it something it has rarely seen described, such as why a fish can’t win a bicycle race, and it may well stumble. It doesn’t “understand” what a fish is, what a bicycle is, or what “winning” means in a physical context. It lacks a model of the world.

“Hallucinations”: Confident lying without intent

One particularly “stupid” characteristic of AI is its tendency to “hallucinate.” If the statistical patterns behind an answer are weak or unclear, the model does not look anything up or admit a gap; it simply invents information.

The problem: The AI presents these fabricated facts, quotes, or sources with the same unshakeable authority as correct information.

- A human would say, “I don’t know.”

- An AI invents a plausible but completely false answer.

This ability to lie eloquently and confidently (without understanding the intent or concept of a lie) is a direct product of its “stupidity”: its inability to distinguish between facts and statistically plausible nonsense.
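Why does a model answer even when the patterns are unclear? A rough sketch, under simplifying assumptions: if a system always picks the most probable candidate, it produces an answer whether that candidate has 99% of the probability or barely more than the alternatives. The scores and candidate answers below are hypothetical numbers chosen for illustration, and `greedy_answer` is a made-up helper, not part of any real library.

```python
import math

def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(scores.values())
    exps = {k: math.exp(v - peak) for k, v in scores.items()}
    total = sum(exps.values())
    return {k: v / total for k, v in exps.items()}

def greedy_answer(scores):
    """Return the most probable candidate; there is no 'I don't know' branch."""
    probs = softmax(scores)
    best = max(probs, key=probs.get)
    return best, probs[best]

# Hypothetical scores for a well-attested fact: one candidate dominates.
clear = {"Paris": 9.0, "Lyon": 2.0, "Rome": 1.0}

# Hypothetical scores for an obscure question: nearly flat, i.e. unclear patterns.
unclear = {"1987": 1.1, "1992": 1.0, "2001": 0.9}

clear_answer, clear_p = greedy_answer(clear)
unclear_answer, unclear_p = greedy_answer(unclear)
```

Both calls return an answer in exactly the same form. In the second case the winning candidate holds only a little more than a third of the probability, yet nothing in the output signals that the choice was close to a coin toss.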

Bias and uncritical regurgitation (garbage in, garbage out)

An AI has no morals, no ethics, and no critical thinking skills. It is a mirror of the data it was trained on.

This means: If the training data contains racist, sexist, or otherwise problematic biases (which is inevitable when the data is drawn from across the internet), the AI will reproduce these biases. It cannot reflect and decide, “That’s a bad view; I shouldn’t reproduce it.”

It is uncritical. It takes everything at face value. This unreflective repetition of human errors is a clear sign of a lack of intelligence.

Context blindness and “brittleness”

AI systems are often “brittle.” They can perform a specific task brilliantly, but fail spectacularly with a slight change in context or input.

Irony and sarcasm: AI struggles to understand subtext, irony, or cultural nuances because these things aren’t explicitly stated in the data.

Adversarial attacks: An image recognition system that correctly detects a cat can often be completely fooled by changing just a few pixels in ways that are imperceptible to humans, causing it to suddenly see a “tank.” This demonstrates that the system doesn’t have a “concept” of a cat, but rather reacts to complex statistical patterns in pixels.
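The brittleness behind such attacks can be sketched with a deliberately tiny example. This is a toy linear classifier over 100 made-up “pixel” values, not a real image recognition network, and the weights and image are assumptions chosen only to make the effect visible. Unlike the few-pixel case described above, this variant nudges every pixel by a very small amount in the direction that lowers the “cat” score, which is the idea behind the fast gradient sign method.

```python
def score(weights, pixels):
    """Linear score: positive means 'cat', otherwise 'tank'."""
    return sum(w * p for w, p in zip(weights, pixels))

def classify(weights, pixels):
    return "cat" if score(weights, pixels) > 0 else "tank"

def sign(x):
    return (x > 0) - (x < 0)

def perturb(weights, pixels, epsilon):
    """Shift each pixel by at most epsilon against the 'cat' direction.

    For a linear score the gradient with respect to the pixels is simply
    the weight vector, so stepping against its sign lowers the score.
    """
    return [p - epsilon * sign(w) for w, p in zip(weights, pixels)]

# Hypothetical 100-"pixel" image and weights, chosen only for illustration.
weights = [0.5 if i % 2 == 0 else -0.5 for i in range(100)]
image = [0.52 if i % 2 == 0 else 0.50 for i in range(100)]

adversarial = perturb(weights, image, epsilon=0.02)

label_before = classify(weights, image)
label_after = classify(weights, adversarial)
largest_change = max(abs(a - b) for a, b in zip(image, adversarial))
```

No pixel moves by more than 0.02 on a 0-to-1 brightness scale, a change a human would not notice, yet the label flips from “cat” to “tank”. Many tiny shifts add up across the whole input, which is all the classifier ever reacts to.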

Conclusion: A powerful tool, but no intelligence

So, is AI useless? Absolutely not. It’s an incredibly powerful tool, perhaps the most powerful we’ve ever created. It is a computer, a pattern recognizer, an automator of inestimable value.

But we urgently need to stop anthropomorphizing it. A hammer is a fantastic tool for driving nails, but no one would call it “intelligent” or accuse it of being “stupid” because it can’t screw in screws. The “stupidity” of AI lies not in its computing power, but in our misunderstanding of what it is. It is an autopilot, not a pilot. It has no consciousness, no intentions, no understanding, and no common sense. And forgetting that is perhaps the greatest danger in dealing with it.

Beliebte Beiträge

From assistant to agent: Microsoft’s Copilot

Copilot is growing up: Microsoft's AI is no longer an assistant, but a proactive agent. With "Vision," it sees your Windows desktop; in M365, it analyzes data as a "Researcher"; and in GitHub, it autonomously corrects code. The biggest update yet.

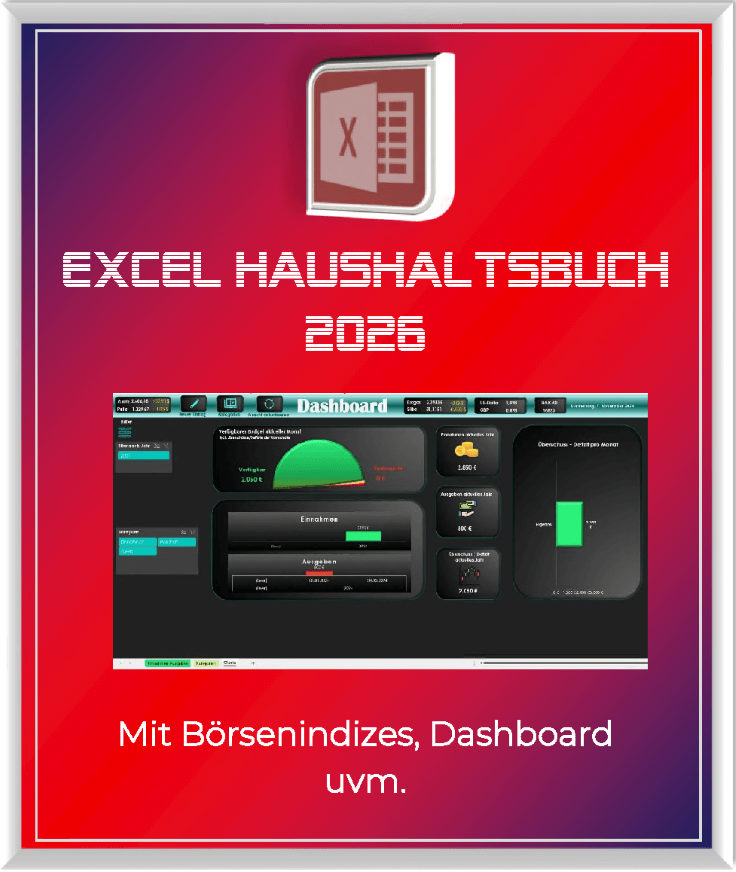

Never do the same thing again: How to record a macro in Excel

Tired of repetitive tasks in Excel? Learn how to create your first personal "magic button" with the macro recorder. Automate formatting and save hours – no programming required! Click here for easy instructions.

IMAP vs. Local Folders: The secret to your Outlook structure and why it matters

Do you know the difference between IMAP and local folders in Outlook? Incorrect use can lead to data loss! We'll explain simply what belongs where, how to clean up your mailbox, and how to archive emails securely and for the long term.

Der ultimative Effizienz-Boost: Wie Excel, Word und Outlook für Sie zusammenarbeiten

Schluss mit manuellem Kopieren! Lernen Sie, wie Sie Excel-Listen, Word-Vorlagen & Outlook verbinden, um personalisierte Serien-E-Mails automatisch zu versenden. Sparen Sie Zeit, vermeiden Sie Fehler und steigern Sie Ihre Effizienz. Hier geht's zur einfachen Anleitung!

Microsoft 365 Copilot in practice: Your guide to the new everyday work routine

What can Microsoft 365 Copilot really do? 🤖 We'll show you in a practical way how the AI assistant revolutionizes your daily work in Word, Excel & Teams. From a blank page to a finished presentation in minutes! The ultimate practical guide for the new workday. #Copilot #Microsoft365 #AI

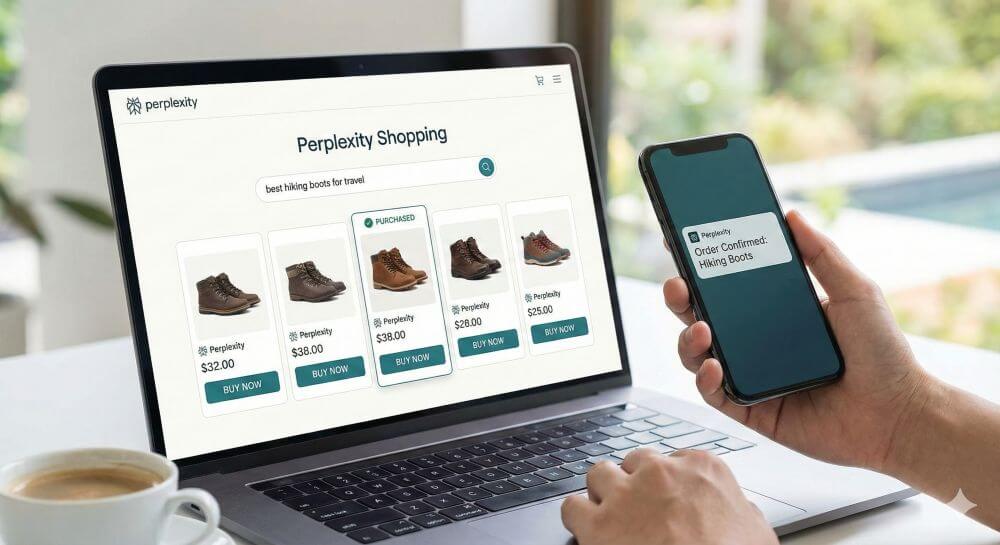

Integrate and use ChatGPT in Excel – is that possible?

ChatGPT is more than just a simple chatbot. Learn how it can revolutionize how you work with Excel by translating formulas, creating VBA macros, and even promising future integration with Office.